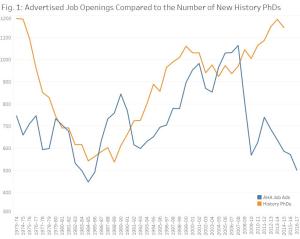

This week, the American Historical Association previewed a forthcoming report on the number of full-time history jobs. The post is entitled “Another Tough Year for the Academic Job Market in History”—which is a bit misleading, since it documents the continuation of a decade-long collapse. In the last hiring year (2016-2017), employers advertised only 289 tenure-track faculty positions and 212 other full-time jobs in the AHA Career Center. During that same year, to judge by the recent past, American universities probably granted more than 1,000 new doctorates in history.

Author Archives: Jonathan Wilson

IOTAR50: Intellectual History from the Undistinguished

We continue “The Ideological Origins of the American Revolution at 50,” our joint roundtable with the S-USIH blog. Today’s post is by Jonathan Wilson, an adjunct faculty member at the University of Scranton and Marywood University. He studies ways that intellectuals—elite and otherwise—articulated American national identity in eastern cities during the early nineteenth century.

Upon first reading The Ideological Origins of the American Revolution, I found it liberating. (That places me alongside Michael and Sara more than Ken this week.) True, Bernard Bailyn’s book was yet another attempt to credit elite white men for an idealistic national founding. From my perspective at the time, however, it modeled a way to study the ideas of relatively ordinary people. Bailyn depicted revolutionary thoughts as the work of communities, not individuals. He showed me that the life of the mind can encompass the inarticulate, the half-said, even the irrational, in ways that historians can analyze. This was powerful.

“Mixing the Sacred Character, With That of the Statesman”: Review of Pulpit and Nation

Spencer W. McBride, Pulpit and Nation: Clergymen and the Politics of Revolutionary America (Charlottesville and London: University of Virginia Press, 2016).

The relationship between Christianity and the American founding is a topic of obvious contemporary political relevance in the United States. It is also a field in which historians during the last few years have labored with great energy.[1] In Pulpit and Nation: Clergymen and the Politics of Revolutionary America, Spencer McBride adds to that labor with a book that is—at first glance—less politically charged than some other contributions have been. Yet Pulpit and Nation advances what may be a subversive claim.

Writing History As If It Matters (to Lots of People)

In a series of classic science fiction stories, Isaac Asimov imagined a scientific discipline called “psychohistory”: a way to predict the future of an interstellar empire. Psychohistory could not foresee individual choices, but it could supposedly predict collective behavior over the course of millennia. At one point in the Foundation series, however, a charismatic figure named the Mule threatened to upend psychohistory’s predictions: he was a mutant, acting in ways the original model could not anticipate. In the universe Asimov imagined, the Mule alone seemed to possess true individual agency. Resisting a powerful model of human behavior, he offered instead a story about a person.

Identity and the Founders: A Response to Mark Lilla

This weekend, Mark Lilla, a historian of ideas at Columbia University, published a New York Times op-ed on “identity liberalism.” Reacting to the outcome of the presidential election, Lilla argues that contemporary American liberalism’s celebration of diversity, however morally salutary in private life, has been politically suicidal at the national level. “National politics in healthy periods is not about ‘difference,’” Lilla writes; “it is about commonality. And it will be dominated by whoever best captures Americans’ imaginations about our shared destiny.”

Lilla’s argument is a response—one of several possible responses—to what I see as a real problem. In contemporary America, demands for inclusion, equality, and dignity often seem to be made in the name of particular groups rather than in the name of the common good. Whether this perception is accurate is another matter. I won’t address that complicated question here. But Lilla’s perspective on early American history warrants a critical response.

Making a Webpage for a Conference Paper

I recently had to cancel a trip to a conference. My panel is continuing without me; the chair has graciously offered to read my paper in my place. Partly because of this, I am doing something I haven’t done before: putting together a companion webpage for the presentation.

Making companion webpages does not seem to be a widespread practice yet at history conferences, but I do know historians who have done it. For other people who are interested in the idea, I thought I would talk through what I am doing, keeping in mind that many presenters may not have extensive experience making webpages.

What’s Livetweeting For, Anyway?

Last week, an anonymous Ph.D. student published a Guardian op-ed under the headline “I’m a serious academic, not a professional Instagrammer.” Among other complaints, the author (a laboratory scientist) condemned the practice of livetweeting academic conferences. Livetweeters care less about disseminating new knowledge, Anonymous wrote, than about making self-promotional displays: Look at me taking part in this event.

I hate to admit it, but the author may have a point. When I shared the article, one of my friends, an anthropologist, observed that she finds livetweeting “baffling” because she would rather listen—and be listened to—than be distracted during a conference talk. Katrina Gulliver, an influential advocate of Twitter use by historians, told me (via, yes, Twitter) that she no longer approves of conference livetweeting either. “Staring at screens is uncollegial,” she argued; it interferes with face-to-face discussions, and the value of the information passed along is dubious too, because “tweets present (or misrepresent) work in [a] disconnected, out of context way.” Bradley Proctor told me he has had one of his talks misrepresented by a livetweeter—a particularly sensitive issue for someone who researches Reconstruction-era racial violence.

Surely these are important concerns. It seems to me that conference livetweeters—yours truly included—need to get better at articulating explicit objectives and boundaries if we’re going to take these risks. So what do people say about the way they use Twitter at conferences?

What Do Early Americanists Offer the Liberal Arts?—Part II

Last week, in the first part of this post, I argued that we tend to justify the liberal arts in two potentially contradictory ways. First, we assert that the liberal arts offer tools for citizenship. Second, we claim they point our way to human values that transcend any community. I argued that both of these justifications or approaches are necessary. I also suggested that early Americanists have not found it easy to explain what we contribute to the second approach.

Today, therefore, I am taking up the question I posed last week. Does early American scholarship offer anything distinctive to the liberal arts as a way of understanding humanity at large?

What Do Early Americanists Offer the Liberal Arts?

Perhaps because the traditional academic year has ended, and probably in part because of the tides and undertows of the current election, we seem to be awash just now in excellent essays about the purposes and state of the humanities.

To do my part to put a stop to that, I am here to ask what the liberal arts have to do with early American studies.

I suspect we tend to take the relationship too much for granted.

“Terrorism” in the Early Republic

Late last week, Americans learned about an armed takeover of a federal wildlife refuge in Oregon. It was initiated by a group of men who have an idiosyncratic understanding of constitutional law and a sense that they have been cheated and persecuted by the United States government. The occupation comes during a time of general unease about national security and fairness in policing. As a result, some critics have been calling the rebels “domestic terrorists,” mostly on hypothetical grounds. One of their leaders, on the other hand, told NBC News that they see themselves as resisting “the terrorism that the federal government is placing upon the people.”

I do not propose to address the Oregon occupation directly. However, since the topic keeps coming up lately, this seems like a good opportunity to examine the roles the word terrorism has played in other eras. As it turns out, Americans have been calling each other terrorists a long time.